ComfyUI + ROCm 7.1 + PyTorch 2.8 + Strix Halo

Install ComfyUI with ROCm 7.1 and PyTorch 2.8 on an AMD Strix Halo system running Ubuntu 24.04 for Stable Diffusion image generation without an NVIDIA GPU.

ComfyUI on Strix Halo — ROCm 7.1 Setup Guide (No NVIDIA Needed)

Can this combo make ComfyUI usable with the Strix Halo?

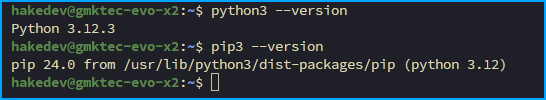

Before starting, you should have Python 3.12 and pip3 installed, and ROCm 7.1 set up on your Strix Halo system running Ubuntu 24.04.

Alright, let's jump into it. You can verify the Python and pip versions with

python3 --version

and

pip3 --version
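The wheel files used later in this guide carry a cp312 tag in their filenames, so they only install on Python 3.12. Here is a minimal sketch of a check for that. The helper names `python_minor` and `matches_cp312` are made up for illustration and are not part of any tool.

```shell
# python_minor (hypothetical helper): print python3's major.minor version,
# for example 3.12.
python_minor() {
  python3 -c 'import sys; print("%d.%d" % sys.version_info[:2])'
}

# matches_cp312 (hypothetical helper): succeed only if the given
# major.minor string is 3.12, which is what the cp312 wheel tag requires.
matches_cp312() {
  [ "$1" = "3.12" ]
}
```

You could run it as `matches_cp312 "$(python_minor)" && echo "OK for cp312 wheels"`. If it prints nothing, the wheels below will most likely be rejected by pip.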

Now clone down ComfyUI

git clone https://github.com/comfyanonymous/ComfyUI.git

Then cd into the directory

cd ComfyUI

Set up the virtual environment with venv

python3 -m venv venv

Enter the environment

source venv/bin/activate

Upgrade pip

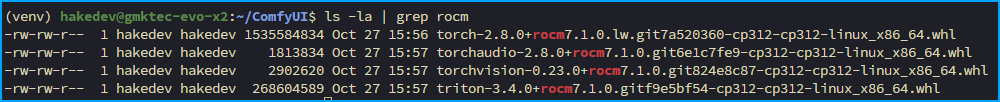

pip3 install --upgrade pip wheel

Download the wheel files for torch, torchvision, triton, and torchaudio (you can paste these in all at once)

wget https://repo.radeon.com/rocm/manylinux/rocm-rel-7.1/torch-2.8.0%2Brocm7.1.0.lw.git7a520360-cp312-cp312-linux_x86_64.whl

wget https://repo.radeon.com/rocm/manylinux/rocm-rel-7.1/torchvision-0.23.0%2Brocm7.1.0.git824e8c87-cp312-cp312-linux_x86_64.whl

wget https://repo.radeon.com/rocm/manylinux/rocm-rel-7.1/triton-3.4.0%2Brocm7.1.0.gitf9e5bf54-cp312-cp312-linux_x86_64.whl

wget https://repo.radeon.com/rocm/manylinux/rocm-rel-7.1/torchaudio-2.8.0%2Brocm7.1.0.git6e1c7fe9-cp312-cp312-linux_x86_64.whl

You can verify all files have been downloaded by running

ls -la | grep rocm
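If you'd rather not eyeball the `ls` output, here is a rough sketch that checks for each of the four wheels by name. The filenames are copied from the wget commands above. `missing_wheels` is a hypothetical helper name, and treating an empty file as missing is an assumption, there to catch downloads that were cut off at zero bytes.

```shell
# Expected wheel filenames, copied from the wget commands above
# (wget saves %2B in the URL as a literal + in the filename).
WHEELS="torch-2.8.0+rocm7.1.0.lw.git7a520360-cp312-cp312-linux_x86_64.whl
torchvision-0.23.0+rocm7.1.0.git824e8c87-cp312-cp312-linux_x86_64.whl
triton-3.4.0+rocm7.1.0.gitf9e5bf54-cp312-cp312-linux_x86_64.whl
torchaudio-2.8.0+rocm7.1.0.git6e1c7fe9-cp312-cp312-linux_x86_64.whl"

# missing_wheels (hypothetical helper): print every expected wheel that is
# absent or empty in the given directory (default: current directory).
missing_wheels() {
  dir="${1:-.}"
  for f in $WHEELS; do
    [ -s "$dir/$f" ] || echo "$f"
  done
}
```

Running `missing_wheels` inside the ComfyUI directory should print nothing if all four downloads finished. Any filename it prints needs to be downloaded again.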

Now install everything

pip3 install torch-2.8.0+rocm7.1.0.lw.git7a520360-cp312-cp312-linux_x86_64.whl torchvision-0.23.0+rocm7.1.0.git824e8c87-cp312-cp312-linux_x86_64.whl torchaudio-2.8.0+rocm7.1.0.git6e1c7fe9-cp312-cp312-linux_x86_64.whl triton-3.4.0+rocm7.1.0.gitf9e5bf54-cp312-cp312-linux_x86_64.whl

Finally, install the ComfyUI requirements

pip3 install -r requirements.txt

Everything has now been installed! You can run ComfyUI now

python3 main.py --listen 0.0.0.0

Open a browser and navigate to the IP of your Ubuntu PC with port 8188. For me that was http://192.168.10.227:8188/
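If ComfyUI starts but seems to be running on the CPU, it can help to check whether the ROCm build of PyTorch actually sees the GPU. Below is a minimal sketch, assuming you run it inside the activated venv. `check_torch_gpu` is a hypothetical helper name. ROCm builds of PyTorch expose the GPU through the usual `torch.cuda` calls, and `torch.version.hip` reports the HIP version the build was compiled against.

```shell
# check_torch_gpu (hypothetical helper): report the installed torch build
# and whether it can see a GPU. Run inside the activated venv.
check_torch_gpu() {
  python3 - <<'PY'
import sys

try:
    import torch
except ImportError:
    print("torch is not importable in this environment")
    sys.exit(1)

print("torch:", torch.__version__)
# None on non-ROCm builds of PyTorch
print("hip:", torch.version.hip)
print("gpu available:", torch.cuda.is_available())
if torch.cuda.is_available():
    print("device:", torch.cuda.get_device_name(0))
PY
}
```

Call it with `check_torch_gpu`. If the `gpu available` line says `False`, the problem is probably in the ROCm or PyTorch install, not in ComfyUI.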

Conclusion

I'm not a ComfyUI pro, especially with AMD, but this seemed to work fairly easily. I'm going to be using ROCm 7.1 for Ollama regardless, so I just wanted to make sure it wasn't going to be a headache to get set up.